This article introduces AgentScope Java 2.0, an open-source framework for building distributed, enterprise-grade AI agents with production-ready features.

This article introduces the launch of the Qwen3.7-Plus multimodal model, an AI swine diagnosis assistant with Muyuan Group, and Model Studio's open-source CLI for AI agents.

This article introduces Qwen-VLA, a general-purpose Vision-Language-Action model that extends multimodal perception and reasoning into continuous action generation for embodied intelligence.

This article introduces Alibaba Cloud SAE, a serverless platform that simplifies application modernization and accelerates AI deployment with zero node management.

Choosing how to deploy a large language model in production is one of the most consequential — and confusing — decisions an AI team can make.

This article introduces a production-grade AI Agent runtime platform combining ACS Agent Sandbox for security and LoongCollector for observability.

This article introduces Tair-KVCache-HiSim, a high-fidelity CPU-based simulator for optimizing multi-tier KV Cache configurations in LLM inference.

This article introduces hierarchical sparse attention: the full KV Cache is stored on the CPU, while the GPU keeps only a Top-k LRU Buffer.

This article introduces a dual memory-pool inference framework enabling efficient hybrid Transformer-Mamba model execution by resolving conflicting caching mechanisms.

This article introduces engineering optimizations to 3FS—KVCache's foundation layer—across performance, productization, and cloud-native management for scalable AI inference.

This article introduces Apache RocketMQ's strategic evolution into an AI-native message engine for long-running sessions, intelligent compute scheduling, and agent collaboration.

Alibaba Cloud unveiled a new AI model subscription service specifically for enterprises and developers.

This article explains how to build a stable, reliable, and efficient real-time speech messaging pipeline using the LiteTopic feature of ApsaraMQ for RocketMQ.

Qwen Conference 2026 invites you to step inside the innovation.

PolarDB for PostgreSQL introduces a one-stop memory management system that combines vector and graph databases to give AI agents persistent, cross-session memory.

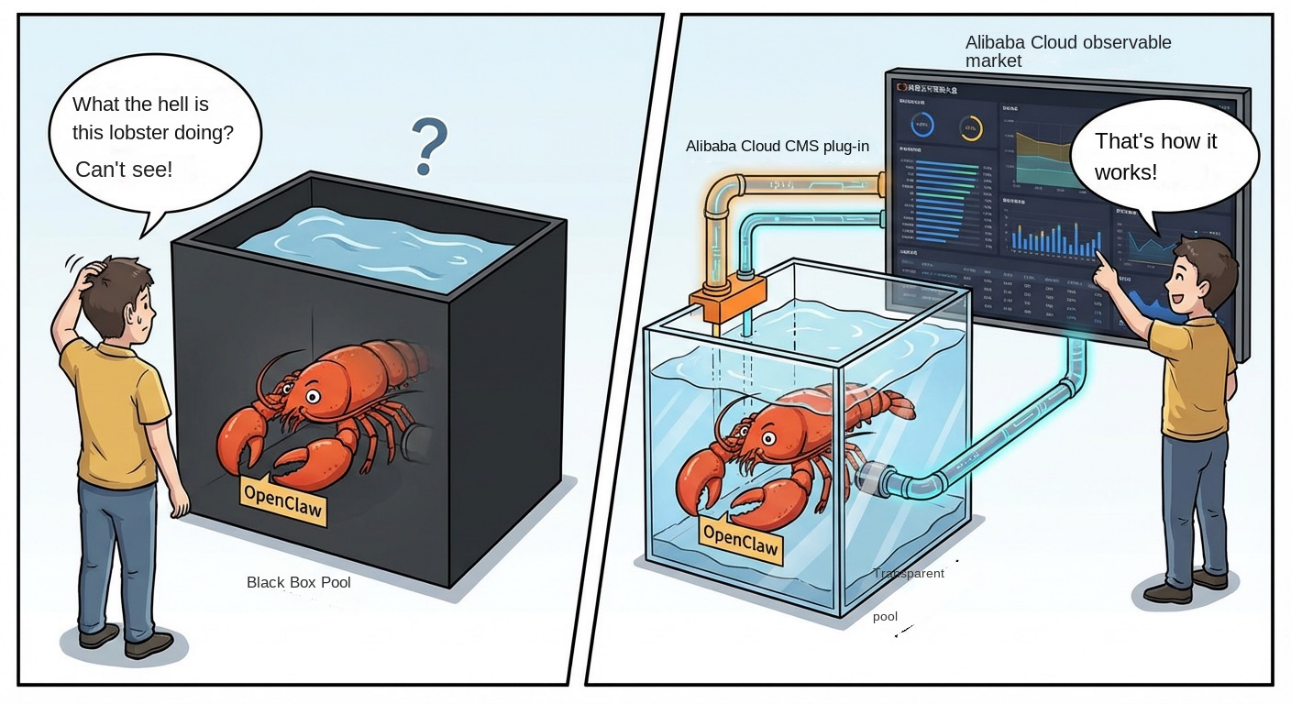

This article introduces openclaw-cms-plugin 0.1.2, which enables accurate multi-turn tracing for AI agents by reconstructing ReAct execution flows and stabilizing concurrent observability.

We are excited to introduce Qwen-Scope, an interpretability toolkit trained on the Qwen3 and Qwen3.5 series models.

This article introduces a unified observability architecture for cross-cloud log analysis and AIOps, designed to streamline multicloud O&M and reduce costs for global enterprises.

This article introduces three top-conference-accepted research achievements by Alibaba Cloud that solve core AIOps challenges in data augmentation, se...

One-command observability integration makes OpenClaw AI agent operations transparent via Alibaba Cloud monitoring plugins.