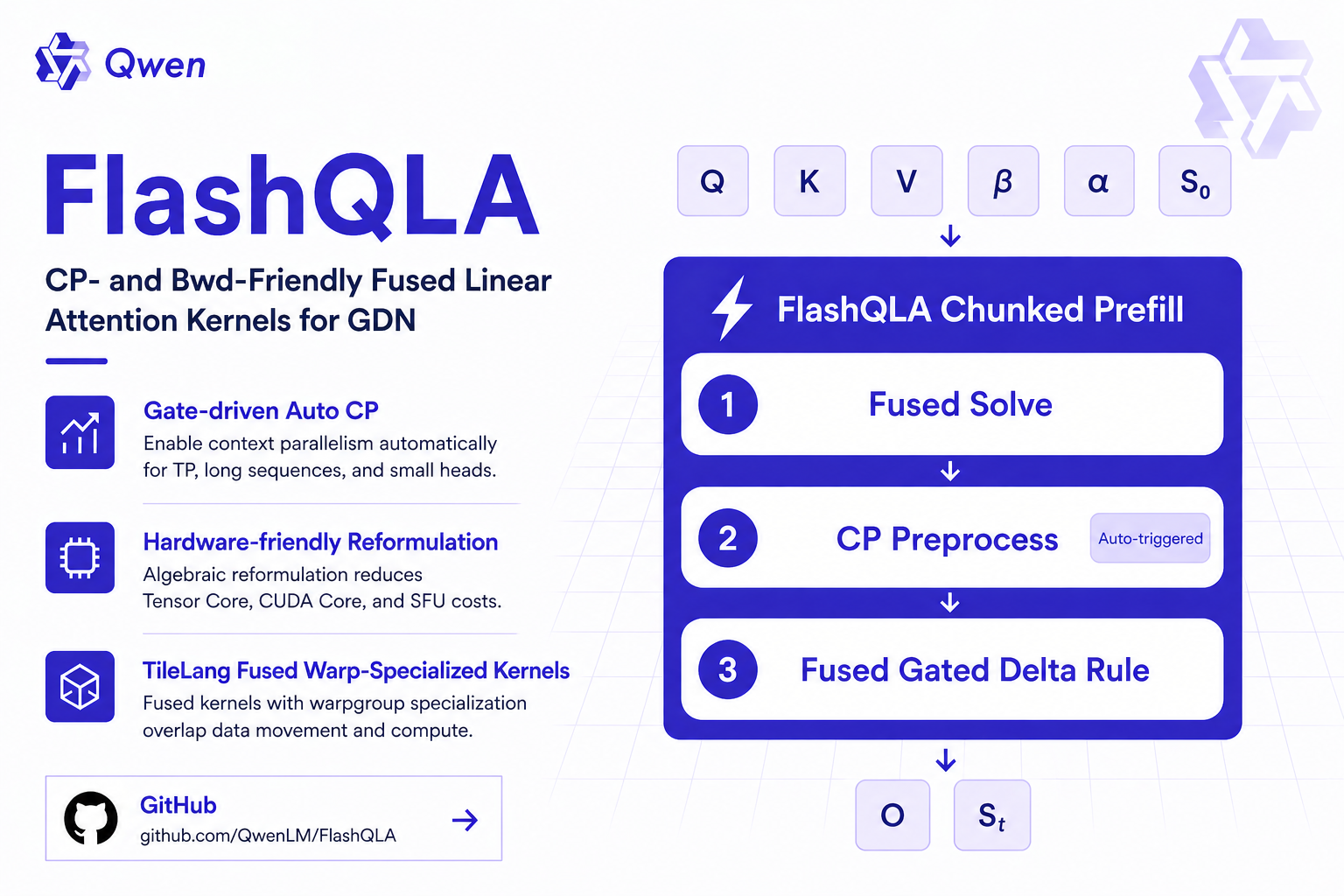

We are officially open-sourcing FlashQLA, a high-performance linear attention kernel library built on TileLang.

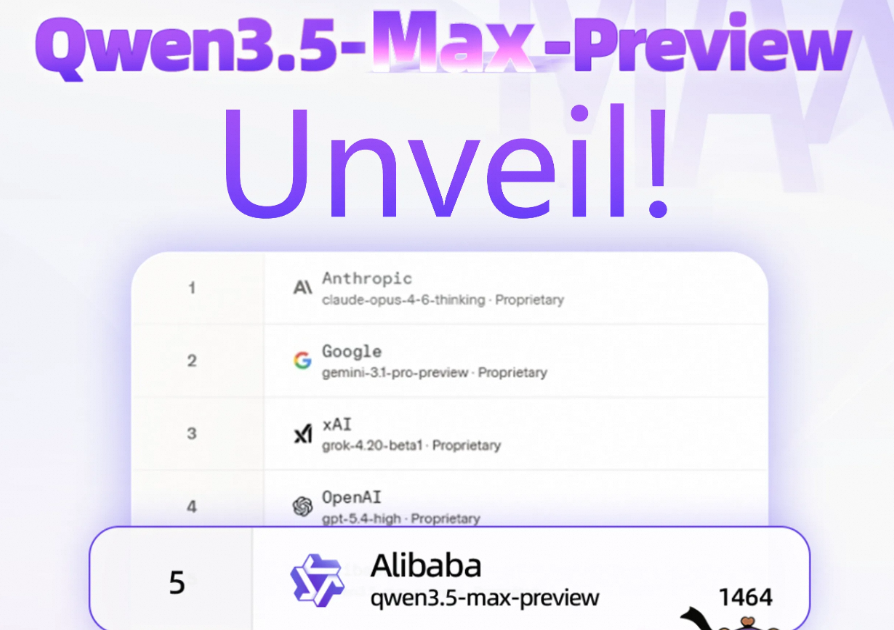

Qwen3.5-Max-Preview, the preview of our next-generation flagship model, recently made its debut on LM Arena.

We are delighted to announce the official release of Qwen3.5, introducing the open weights of the first model in the Qwen3.