This article introduces building AI-powered recommendation systems on Alibaba Cloud using PAI, AIRec, and PAI-Rec for personalized, low-latency user experiences.

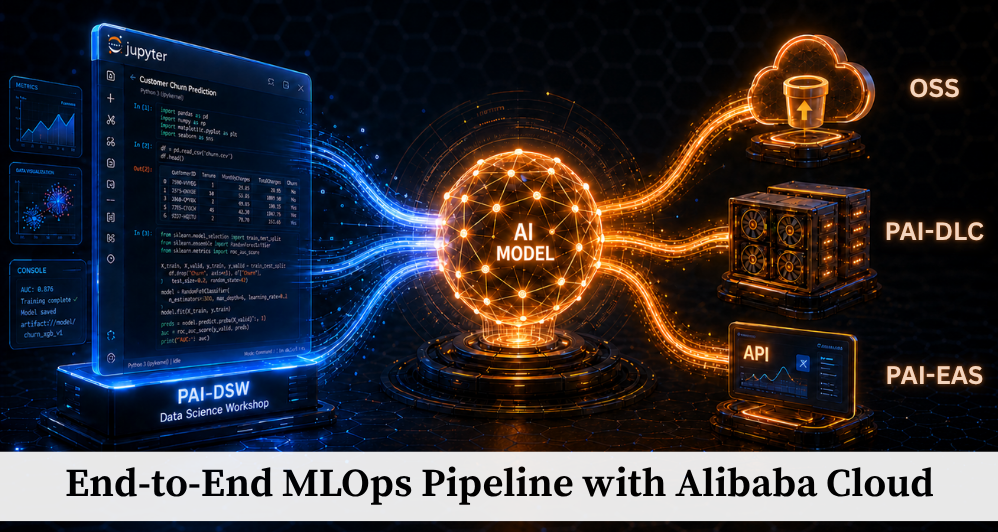

This article examines how Alibaba Cloud's PAI-DSW, PAI-DLC, OSS, and PAI-EAS form a complete, production-viable MLOps pipeline and the configuration d.

This article introduces how to automate end-to-end ML pipeline deployment on Alibaba ACK using KitOps for packaging and CI/CD integration.

This article introduces the theoretical aspects of containerization and how to choose the proper cloud vendor.

This article follows up on two previous articles and explains how to run a machine learning model on Docker.

Part 2 of this series explains installing NVIDIA-Docker and pulling TensorFlow GPU Docker images.

Part 1 of this series describes creating and preparing an environment for training an AI model or running inference with it.

This article will focus on the machine learning (ML) pipeline of machine/deep learning infrastructure operations (MLOps).